I publish a monthly email newsletter with personal updates and interesting things I read or learned that month. The latter is archived below. If you’d like to be added to the newsletter, email me.

February 2026 Roundup

Plenty was written on the Pentagon's falling out with Anthropic this week. This was one of the more clear-headed articles.

Lawfare, Alan Z. Rozenshtein

But the deeper problem isn't who's right in this negotiation; it's that the negotiation is happening at all. The terms governing how the military uses the most transformative technology of the century are being set through bilateral haggling between a defense secretary and a startup CEO, with no democratic input and no durable constraints. Congress should be setting these rules. And it should do so in a hurry.

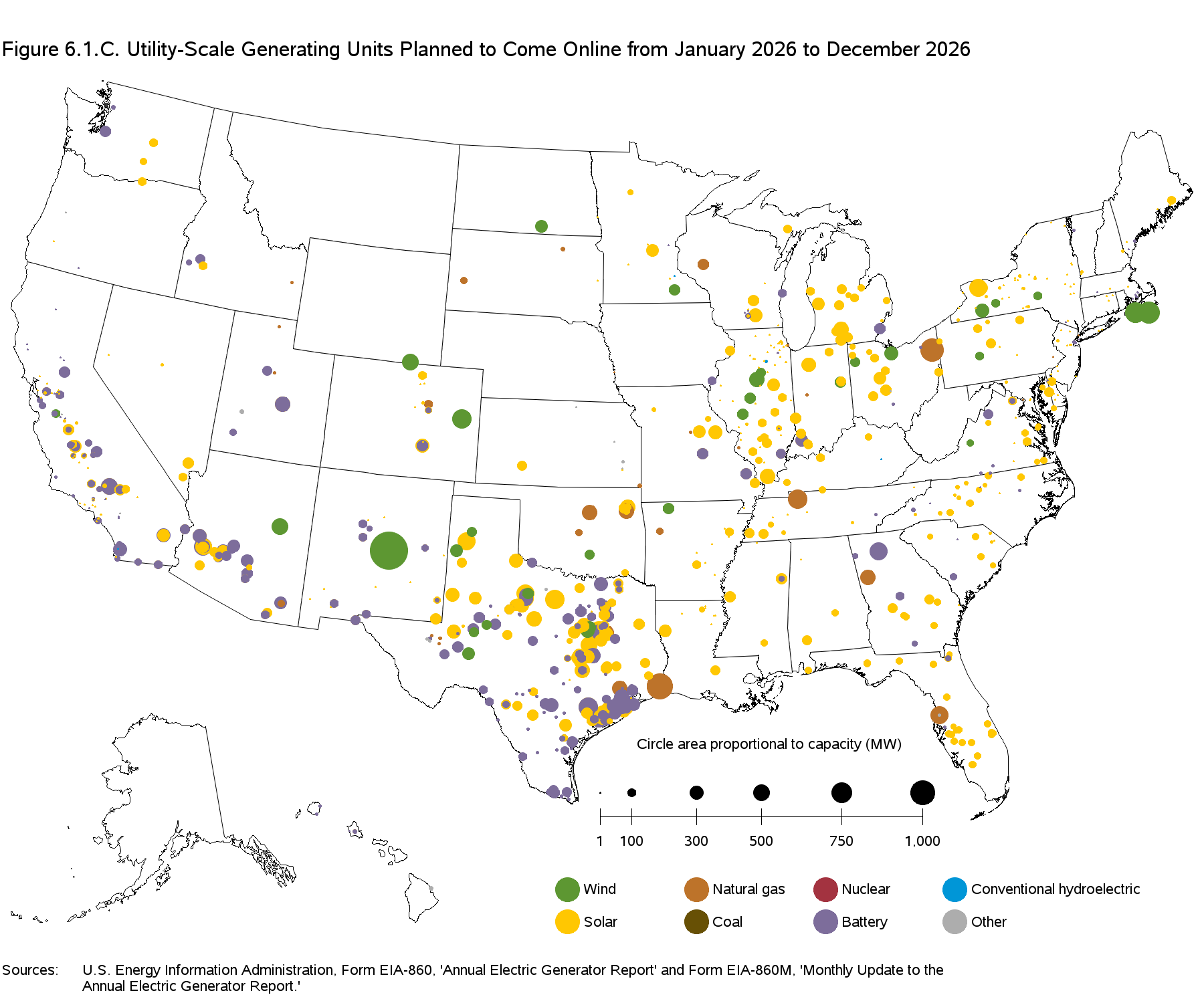

A good visualization of grid-scale energy being brought online in 2026.

A two-part series considering the policy implications of recursively improving AI systems.

I agree with Dean that policymakers should seriously discuss recursive self-improvement. However, his last two premises are weak. "Competence" is too vague to be useful. Models have exceeded human "competence" on narrowly defined math and coding tasks for the past 12 months, so it shouldn't be a surprise that they write most of the code at these companies. However, without metacognitive monitoring and self-directed planning, the models look more like another step in the evolution of compilers, IDEs, and build tools than a drop-in labor replacement. I'm not saying that won't happen, just that he's missing a premise.

There is one assumption I’ll ask you to make with me, which is that substantial automation of AI research is a near-term possibility. This requires believing a few things. First, that AI research and engineering is substantively composed of work like: finding optimizations in various complex software systems; designing and testing experiments for AI model training and posttraining; and creating software interfaces to expose AI model capabilities to users. Second, that a great deal of this work is essentially reducible to the engineering of software. Third, that AI models, while not yet geniuses, are reaching quite high levels of human competence. Fourth, that frontier lab leadership and staff are serious when they describe AI research automation as a near-term goal, and that frontier lab research staff are telling the truth when they say that AI is already writing a large fraction of their code.

On Recursive Self-Improvement (Part 1), Dean Ball

The vegetables in VeggieTales are not Christian.

Scott Alexander's rebuttal to the "stochastic parrot" argument

Next-Token Predictor Is An AI's Job, Not Its Species, Scott Alexander

Recently, they [Anthropic] explored how Claude predicts where a line break will be in a page of text. Since line break is a token, this is literally a next-token prediction task. The answer was: the AI represents various features of the line breaking process as one-dimensional helical manifolds in a six-dimensional space, then rotates the manifolds in some way that corresponds to multiplying or comparing the numbers that they’re representing ... Next-token prediction created this system, but the system itself can involve arbitrary choices about how to represent and manipulate data.

Film students can't sit through films.

A lot of discussion around this article focused on attention span. I think it's as much a sign of declining effort and standards. If I had to guess, I'd say fewer than 50 percent of students at IU read the assigned books in English classes.

At Indiana University, where Erpelding worked until 2024, professors could track whether students watched films on the campus’s internal streaming platform. Fewer than 50 percent would even start the movies, he said, and only about 20 percent made it to the end.

The Atlantic, Rose Horowitch

Bacteria as a treatment for cancer. An idea I hadn't heard before.

These are mouse studies, so take them with a grain of salt.

Ewingella americana exhibited remarkably potent cytotoxic activity with selective tumor-targeting ability characteristic of facultative anaerobic bacteria. Mechanistic investigations revealed that E. americana functions through a dual-action mechanism: direct tumor cell killing and robust activation of host immunity, leading to enhanced T cell, neutrophil, and B cell-mediated tumor attack. Treatment with E. americana significantly outperformed standard therapies, including anti-PD-L1 antibody and doxorubicin, in tumor regression studies.

Discovery and characterization of antitumor gut microbiota from amphibians and reptiles, Iwata et al.

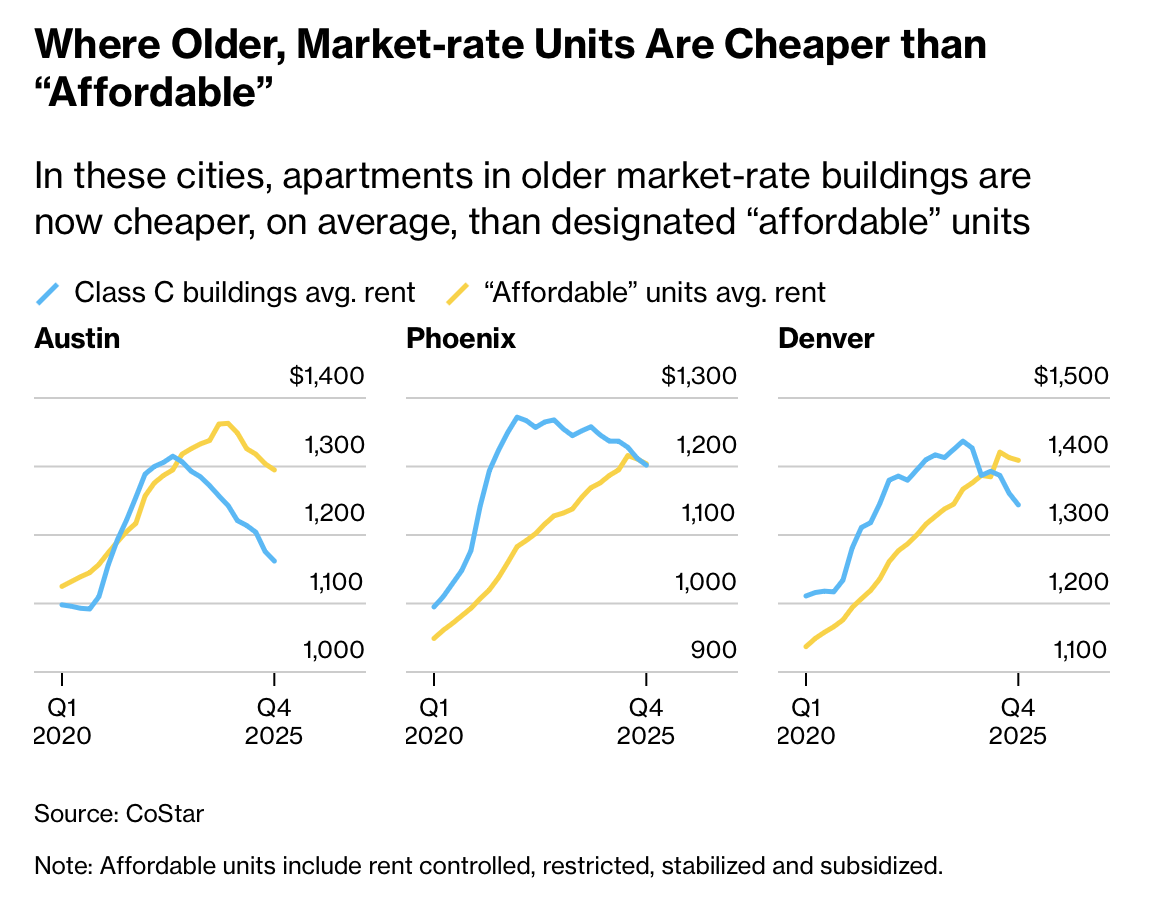

Luxury apartment construction is bringing down rent.

Luxury Apartments Are Bringing Rent Down in Some Big Cities, Bloomberg